Key Milestones in Computing History

1830s - The Analytical Engine

Charles Babbage designed the Analytical Engine, a general-purpose mechanical computer that was never completed in his lifetime, pioneering ideas of programmability and automated calculation.

1946 - ENIAC

The Electronic Numerical Integrator and Computer is widely regarded as the first programmable, general-purpose electronic digital computer, marking the dawn of the electronic age.

1964 - IBM System/360

This family of mainframe computers introduced software compatibility across models, so programs written for one machine could run on another, setting standards for corporate computing environments.

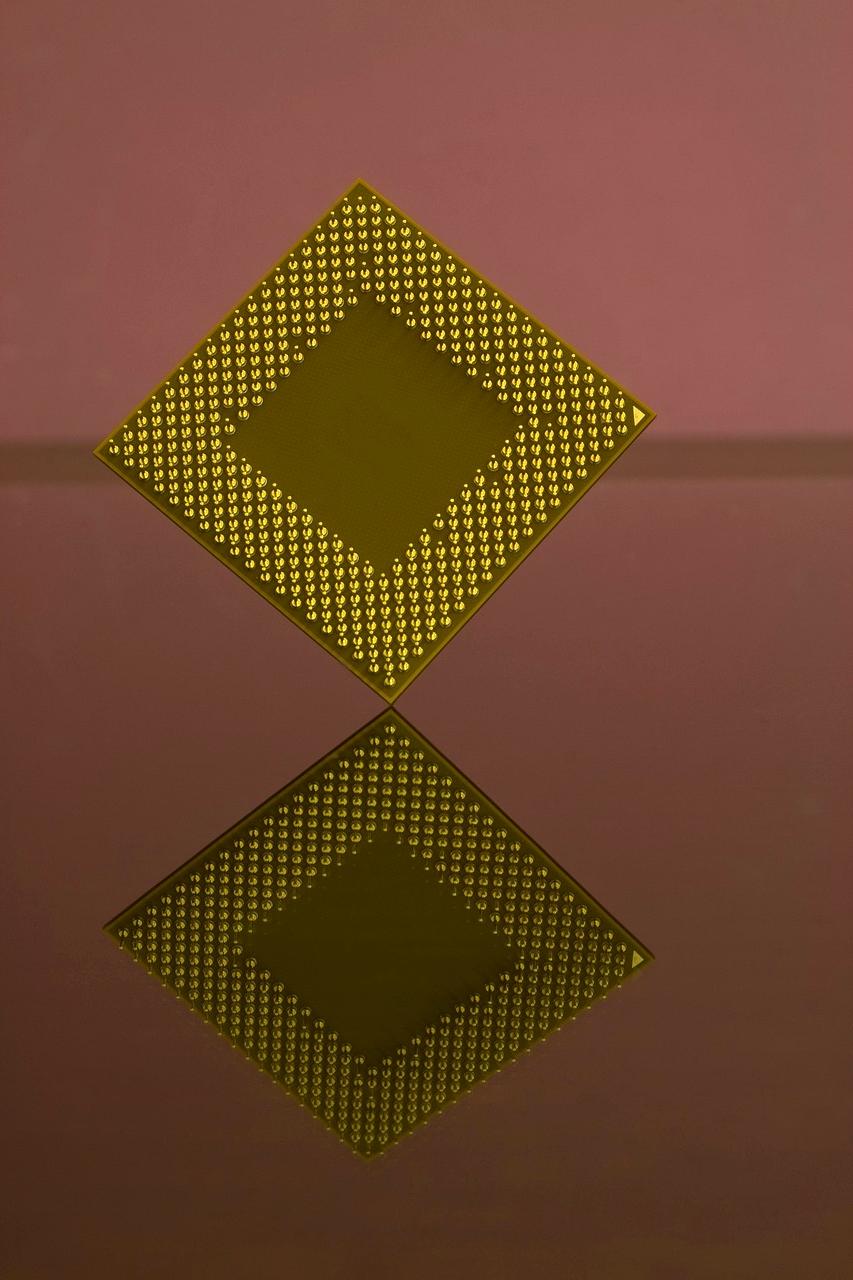

1971 - Intel 4004

The first commercially available microprocessor put a complete CPU onto a single chip, paving the way for the personal computing revolution.

1976 - Apple I

Apple’s first computer was sold as an assembled circuit board to which buyers added their own case, power supply, keyboard, and display. It broadened access to computing and helped launch the PC industry.

2020s - Quantum Computing Era

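Quantum computers harness quantum-mechanical effects such as superposition and entanglement to attack certain problems that are impractical for classical machines, marking the cutting edge of computational power and technological potential.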

Foundational Concepts and Breakthroughs

Computing history is marked by transformative innovations that changed how we calculate, communicate, and live. Early calculating aids like the abacus laid the groundwork for more sophisticated analog and digital machines.

The mid-20th century saw the transition from vacuum tubes to transistors, enabling smaller, faster, and more reliable computers. Programming languages and operating systems emerged, making computers versatile tools across industries.

Legacy and the Road Ahead

The heritage of computing devices inspires the future of technology. Innovations like quantum computing, AI, and neural interfaces are built upon foundational principles developed over centuries.

Understanding this history helps us appreciate the rapid pace of innovation and the societal impact of digital transformation, preparing us to shape a more connected and intelligent future.
